Data Migration: Expectation vs. Reality

A deep-dive into the reality of the data migration process and how Infosistema’s DMM will help you get it done in only a few minutes

More often than not, when an OutSystems professional faces the challenge of migrating data between environments, their first impulse is “I’ll do it by myself. It should just be a couple of scripts.”

Doing the migration by yourself is possible, but in this article we will explain why that expectation is unrealistic and how Infosistema’s DMM solution can help you migrate data without facing any of the problems you would normally encounter.

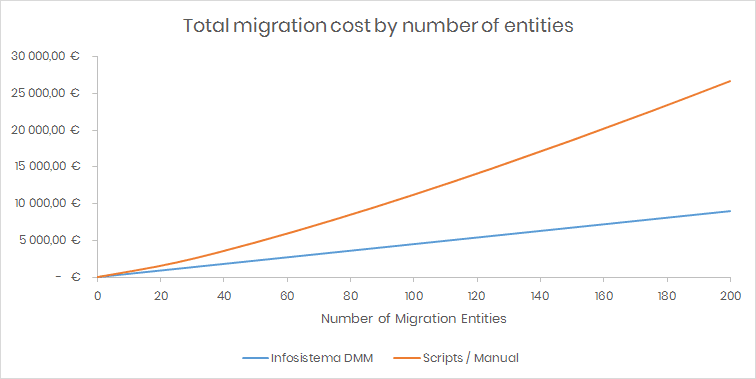

You’ll face higher costs

This is the first common problem a migrator will find, and the most unpleasant pitfall a customer will encounter. It is a tough pill to swallow because the human mind is not good at predicting exponential growth.

The team performing the migration first makes an initial estimate based on the number of entities that need to be migrated. They may even account for specific risk factors. But migration complexity grows exponentially with the number of entities (because of the relations, constraints, circular dependencies, unique fields, and so on), so the team will need to put in more effort for each entity that is added to the migration.

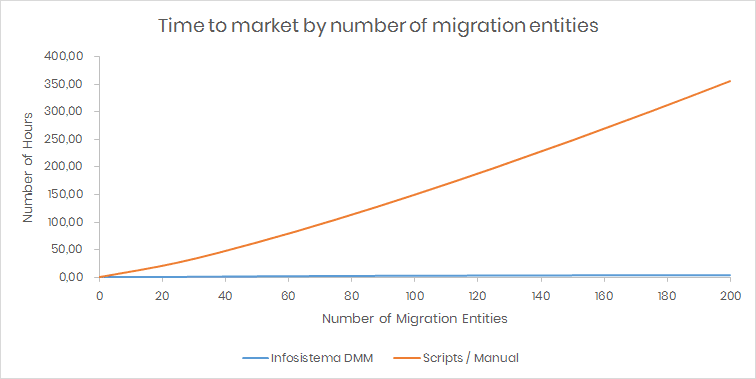

Time to market will increase

OutSystems customers are used to agile development and prototyping quickly. Why is data migration so different?

Let’s try a thought experiment. Think about how much time it takes to produce a script for migrating a single table, including the time to test and execute it. Keep that number in mind. What if the table has several foreign keys (FKs)? Will the data they reference also be migrated? If not, what will happen to the destination data? Now, let’s say some of the fields are mandatory or unique. What if some of the FKs are also mandatory and unique, or if some of them point to OutSystems entities, like Users, Roles, or eSpaces?

All of this time is spent analyzing, building a script, and testing for a single table. However, two tables won’t simply take twice the time. The tables must be considered together, because they may have dependencies between them, some of which may even be circular! That’s when exponential growth creeps in and consumes all of the time you’ve allocated to the migration team. And, as you know, time is money, which means the cost to migrate your data will get even higher.
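To make the dependency problem concrete, here is a minimal Python sketch of the ordering step alone. It is not how DMM works internally; the entity names and the `FOREIGN_KEYS` mapping are hypothetical, and a real script would have to read the relations from the actual data model. It sorts entities so that every foreign key target is migrated before the entity that references it, and reports a circular dependency when no such order exists.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical data model: each entity maps to the entities its FKs point to.
FOREIGN_KEYS = {
    "User": set(),
    "Product": set(),
    "Customer": {"User"},
    "Order": {"Customer"},
    "OrderLine": {"Order", "Product"},
}


def migration_order(foreign_keys):
    """Return entities ordered so each FK target comes before its referrer."""
    return list(TopologicalSorter(foreign_keys).static_order())


def find_cycle(foreign_keys):
    """Return the entities in a circular dependency, or None if there is none."""
    try:
        migration_order(foreign_keys)
    except CycleError as err:
        # graphlib stores the detected cycle as the second exception argument.
        return err.args[1]
    return None


if __name__ == "__main__":
    print(migration_order(FOREIGN_KEYS))
    # Two entities that reference each other cannot be ordered at all.
    print(find_cycle({"Department": {"Employee"}, "Employee": {"Department"}}))
```

Even this small sketch only answers "in which order?". A circular dependency still needs a manual strategy, such as inserting rows with empty FKs first and updating them in a second pass, which is exactly the kind of effort that is easy to leave out of an initial estimate.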

Furthermore, let’s assume that development won’t stop and migration must be implemented in parallel. How will you handle changes to entities whose script was created weeks ago? How will the inclusion of a new field be handled in the migration scripts? How will your team handle this new challenge?

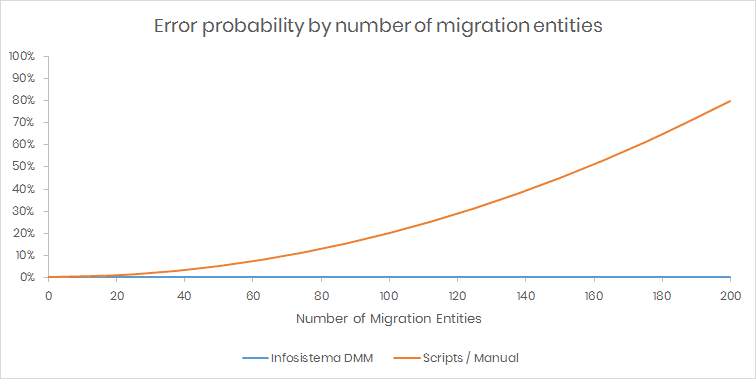

There is a greater probability of errors

Data migration should not be taken lightly. A problem with a script execution could be as disastrous as breaking your entire destination environment.

Your migration script might have changed some flag on some field, and now your application won’t start or the environment has started behaving erratically after the migration. Everyone who has worked in data migration has gone through these kinds of problems that only appear during execution. The team will start saying things like “It’s Murphy’s Law” or “I don’t get it. It was working nice on my machine.” Assuming you have a backup, you’ll have to restore it, along with all of the hassle that involves.

Errors are more common not only because of the exponential growth but also because of the time it takes to deliver the migration scripts. During this time, the development team will keep creating entities that tie in with the entities being migrated, removing some fields, or changing their data type or size, maybe even moving entities from one eSpace to another. However, they generally “forget” to inform the migration team of those changes, causing scripts to fail and potentially starting a non-productive blame game.
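A team writing its own scripts would need some guard against this kind of silent change. The Python sketch below shows one possible check, under loud assumptions: the `{entity: {field: type}}` structure, the entity names, and the `schema_drift` helper are all hypothetical, and extracting the real model from an environment is left out entirely. It compares the data model captured when a script was written with the model as it exists today.

```python
def schema_drift(snapshot, current):
    """List differences between two {entity: {field: type}} mappings."""
    changes = []
    for entity in sorted(snapshot.keys() | current.keys()):
        old = snapshot.get(entity)
        new = current.get(entity)
        if old is None:
            changes.append(f"{entity}: entity added")
        elif new is None:
            changes.append(f"{entity}: entity removed")
        else:
            for field in sorted(old.keys() | new.keys()):
                if field not in new:
                    changes.append(f"{entity}.{field}: field removed")
                elif field not in old:
                    changes.append(f"{entity}.{field}: field added")
                elif old[field] != new[field]:
                    changes.append(
                        f"{entity}.{field}: type changed "
                        f"from {old[field]} to {new[field]}"
                    )
    return changes


if __name__ == "__main__":
    # Hypothetical model when the migration script was written...
    snapshot = {"Customer": {"Id": "Integer", "Name": "Text(50)"}}
    # ...and the model a few weeks of development later.
    current = {
        "Customer": {"Id": "Integer", "Name": "Text(100)", "Email": "Text(250)"},
        "Invoice": {"Id": "Integer"},
    }
    for change in schema_drift(snapshot, current):
        print(change)
```

Running a check like this before every execution turns a failed script into an early warning, but it is one more piece of tooling that the migration team has to build and maintain.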

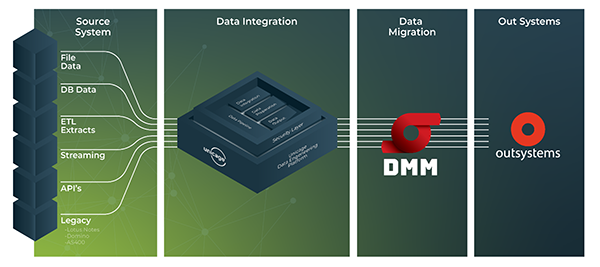

How Data Migration Manager (DMM) solves your data migration troubles

Regarding the problem of higher costs: with Infosistema’s DMM solution, cost grows linearly with complexity, and it can be even cheaper if you take into account that you can further reduce the complexity by breaking the migration into smaller logical chunks.

Now, let’s get back to the time-to-market issue. With Data Migration Manager, time to deploy is measured on a much smaller time scale. You install it from the Forge, set up the database connections and a new migration configuration, and within minutes you’re watching your data peacefully flow between environments.

Last but not least, regarding the probability of errors, the first thing you need to know is that DMM is a product. That doesn’t mean that it is completely error-free, but we can assure you that we work on continuous improvements and fixes. Therefore, in the improbable situation that your data structures have some strange corner case not already handled by DMM, the product will eventually grow to support that case too. This way all customers will benefit.

Key Takeaways

As you can see, data migration is so much more than just “handling a couple of scripts.” The reality is that migrating data all by yourself can be complicated, to say the least.

All of the problems mentioned above only take into account the simple migration of data between environments. If you also need data anonymization, data filtering, unmanned execution, and so on, you’re just adding layers of complexity to an already complex scenario.

Infosistema’s DMM will handle all of this and much more for you. So, what are you waiting for? Learn more about DMM here.